Benchmarking for hydro in changing energy markets

29 February 2008

Benchmarking can improve performance in a range of applications including hydro plants, explains Eric Tiffany, senior vice president – power generation at HSB Solomon Associates

Benchmarking, a tested and proven business management and optimisation practice, has been used in various industries for many years. There are many conceptions of benchmarking, all of them involving the emulation of practices, programmes and processes of companies that are the leaders in their industries. Through an understanding of how data could or should be used to drive performance, the energy industry has developed a powerful means of maintaining and improving performance of capital-intensive assets.

For almost 30 years, energy market segments have used Solomon’s methodology in a variety of ways. Some companies have integrated it into their culture to the point that executives’ compensation is, in part, tied to the results. Because the approach is tailored to the individual nuances and operating characteristics of the particular asset, its success in other segments of the energy industry sets the stage for the benchmarking of hydropower assets.

One reason benchmarking and comparative analysis processes are still used as management tools after more than 25 years is that markets and competitive forces are continually changing. Such change within the hydroelectric industry in the US is evident in the upcoming mandatory reliability data reporting for hydro plants following the passage of the Energy Policy Act of 2005. While final implementation is still pending, it is likely that any generating unit connected to the high-voltage electric grid will be required to report reliability to the North American Electric Reliability Corporation (NERC). Furthermore, Europe is currently undergoing consolidation while also needing to meet increasing capacity demands with an emphasis on renewable or green energy assets. Considering such changes, present in markets worldwide, it is imperative to know how good you are, whether you can get better, and, if so, by how much. How will you know?

Through Solomon’s work with CEATI International Inc., born out of the Canadian Electric Association’s (CEA) research and development programme, we are seeing North American hydropower assets responding to such change as a result of market pressures. However, change for its own sake is not warranted.

Solomon’s approach to hydro assets provides a methodical, proven and objective way to respond to changes in the market: it assesses operations by analysing valid data on a consistent, accurate, and confidential basis. The company employs sophisticated normalisation and other methodological techniques to extract the most insight from the analysis.

Challenges facing hydro generators may include the need to:

• Change the operating regime in response to deregulation, which impacts the reliability and life of units.

• Demonstrate how well operations are optimising the value of assets in support of a transaction.

Regardless of the question, what is needed in the end is data that are accurate and comparable.

The sheer number of assets in the power generation segment makes it different from some other segments in the energy industry. Recognising the differences between assets and knowing how they are operated has been a key part of the approach to benchmarking power assets developed by Solomon, a process called Management-By-Exception, whereby a company spends as few resources as possible to extract the most valuable information. Detailed benchmarking of every asset in a power generation fleet is not recommended; the focus should instead be on those assets that offer the greatest opportunity or have the biggest need. Most personnel know their plants and understand where the issues exist; benchmarking simply offers a way to quantify the circumstances and capture opportunities.

There is considerable wisdom in the statement, “If you do not know where you are going, any road will take you there.” In a benchmarking context, the ability to measure performance and identify potential improvement opportunities provides the road map to set realistic targets and plans to capture those opportunities. Benchmarking can be used to allocate human and working capital resources, evaluate the impact of changing operating modes, and predict operating and maintenance costs based on known cost drivers, amongst others. Understanding the cost drivers within a regional market/commercial structure allows the business to be optimised for those drivers, within the constraints of safety and environmental stewardship.

How good is good?

A logical first step along the path towards performance improvement involves answers to the questions “How good is good?” and “Where do we rank?” Since the energy industry is dominated by engineers, the answers (and their implications) are not accepted readily or without scrutiny, and it is not enough simply to emulate the practices or results of the top performers. Instead, we consider more critically and analytically the efficacy of the comparisons themselves because of the intended consequence of the conclusions – pursuing action and committing resources and money.

Before the question “How good is good?” can be answered, the benchmarking methodology must exhibit several key characteristics to gain acceptance by users. The main characteristics of Solomon’s methodology are:

• Consistency – What is considered maintenance cost and what is considered capital spend? How are overhauls or turnarounds considered by the comparisons? Many asset owners have different opinions as to how to treat these issues. However, ensuring that interpretations are minimised and the data are consistent according to strict definitions contributes to their utility.

• Confidentiality – Asset owners tend to be more willing, careful, and accurate in providing data if they know that it will be held in confidence.

• Validity – Ensuring that the data submitted make sense from engineering, operations, and commercial perspectives. For example, the data are validated by former executives and managers who were historically responsible for such assets. Such attention to detail increases the likelihood of accuracy and preserves the integrity of the database.

These characteristics must be evident to truly discern how “good” the good really is, and from this basis other, more sophisticated, analyses can be developed.

Apples-to-apples

“I’m different” or “You cannot compare me to them” are common responses to benchmarking comparisons. To develop the most useful comparisons, appropriate peers must be selected from among those with similar business models, technology, configuration, economies of scale, and utilisation. More often than not, there is no exact match to an asset. Site requirements, ambient conditions, technology age differences and economies of scale contribute toward the difficulty in developing a homogeneous comparison group. Selecting too large and diverse a peer group could require many data adjustments, which introduce variability and diminish the integrity of the analysis; too small a peer group, on the other hand, is unlikely to provide statistically valid data.

Understanding the potential pitfalls in the comparative analysis is crucial to providing meaningful comparisons.
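The peer-group trade-off can be illustrated with a minimal sketch. It is not Solomon’s selection procedure: the `Unit` fields, the tolerance values and the minimum group size below are all assumptions chosen for illustration, and the fleet is invented.

```python
from dataclasses import dataclass


@dataclass
class Unit:
    name: str
    capacity_mw: float
    age_years: float
    technology: str  # e.g. turbine type; hypothetical field


def select_peers(target, candidates, capacity_tol=0.5, age_tol=15.0, min_size=3):
    """Return (peers, adequate).

    A candidate is a peer if it shares the target's technology, its capacity
    is within capacity_tol (as a fraction of the target's capacity) and its
    age is within age_tol years. 'adequate' is False when the group is too
    small to support a meaningful comparison. All thresholds are assumptions.
    """
    peers = [
        u for u in candidates
        if u.name != target.name
        and u.technology == target.technology
        and abs(u.capacity_mw - target.capacity_mw) <= capacity_tol * target.capacity_mw
        and abs(u.age_years - target.age_years) <= age_tol
    ]
    return peers, len(peers) >= min_size


# Invented fleet, for illustration only.
target = Unit("A", 100.0, 30.0, "francis")
fleet = [
    Unit("B", 120.0, 35.0, "francis"),
    Unit("C", 300.0, 30.0, "francis"),  # too large to be a fair comparison
    Unit("D", 90.0, 28.0, "kaplan"),    # different technology
    Unit("E", 80.0, 40.0, "francis"),
]

peers, adequate = select_peers(target, fleet)
```

Widening the tolerances enlarges the group at the cost of homogeneity; tightening them does the reverse, which is the tension described above.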

Additionally, models or techniques have been developed to normalise complexity differences between facilities and provide another distinct means (and perspective) for assessing performance. Simple metrics such as throughput or utilisation could not adequately describe the cost dynamics, which provided the motivation to develop normalisation factors. For the energy industry, Solomon has developed normalisation factors that gauge the complexity of an asset and how much bearing that complexity has on performance.

Equivalent Generation Complexity (EGC) is a metric derived from Solomon’s patented methodology that normalises the cost drivers as a function of size, technology, operating hours, starts, age, etc. to allow for the prediction of total cost less fuel. As this analytic capability is brought to the hydro sector, it can be noted that elsewhere in the energy industry EGC has been shown to be a good predictor, both for operations staff and for management in headquarters.

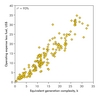

As shown in Figure 1, for fossil-fired generation, EGC is a good predictor of the cost of operations (less fuel cost) across all types of fossil-fired technologies, with a coefficient of determination (r²) over 93%. Such a correlation is much greater than what we have found using installed capacity (r² of 65%) or energy (r² of 73%), and EGC is thus a better metric for normalising differences.
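For readers less familiar with the coefficient of determination, the sketch below shows how candidate normalising variables can be compared on that basis. It assumes a simple least-squares straight-line fit, which may not be the form of model behind Figure 1. The figures are invented, and the `complexity` score is a hypothetical stand-in rather than EGC itself.

```python
from statistics import mean


def r_squared(x, y):
    """Coefficient of determination for a least-squares straight-line fit of y on x."""
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # Residual and total sums of squares.
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    return 1.0 - ss_res / ss_tot


# Invented figures for five units, for illustration only (not Solomon data).
cost = [10.0, 14.0, 21.0, 24.0, 33.0]            # annual cost less fuel
capacity = [100.0, 300.0, 200.0, 500.0, 400.0]   # installed capacity, MW
complexity = [1.0, 1.5, 2.0, 2.5, 3.2]           # hypothetical complexity score

r2_capacity = r_squared(capacity, cost)
r2_complexity = r_squared(complexity, cost)
```

The variable with the higher value explains more of the variation in cost across the sample, which is the sense in which one normalising metric is judged "better" than another.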

In today’s carbon-constrained business environment, a further metric we have developed is Carbon Emission Intensity (CEI), a useful measure of greenhouse gas (GHG) emissions, albeit one developed for a non-generation industry, refining.

Given a certain combination of technology, size, etc., the generation metric, EGC, is used to determine the appropriate level of spending, the impact of fuel quality on spending, and the quantification of the impacts of maintenance spending on reliability. As EGC has gained acceptance through its use in our analysis, power plant managers and executives alike feel vindicated in hearing what they already know, especially if they are under-spending as a result of corporate budget cuts and have been cautioning that the reliability of their units will inevitably suffer as a result. EGC simply quantifies this effect, providing a prediction of when such deterioration in reliability may occur (typically two to three years, should a generator not alter its below-par spending practices).

Helping To Improve Hydro Assets

We have only begun to explore the numerous applications of EGC. As our database of operational and financial performance of hydro assets grows, we intend to develop EGC for the hydroelectric industry and provide a tool that will help in the management and improvement of those assets.

Considering the amount of effort that goes into the process and methodology, the analysis is relatively easy. There are numerous graphs and charts typically produced from a benchmarking effort that are used to assess the overall performance of the asset. Benchmarking is not an exact science, but it is an invaluable tool to integrate several different perspectives and recognise some of the inherent trade-offs within a capital-intensive asset. For example, it is our experience that an asset cannot be the best in every performance category – availability, reliability, cost, and efficiency – all at the same time.

Although we produce metrics that span every area of the operation, one of the more recent developments has been in the area of operational risk. The work to date is for a fossil-fired power plant, but it is worth noting its potential for the hydro sector.

Risk is the product of the frequency and severity of problems; a line of constant risk therefore equates high-probability/low-cost events with low-probability/high-cost events. With this analysis, managers can determine the levels of risk that need to be carried throughout the plant and where working capital resources should be applied.
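The arithmetic behind this view of risk can be sketched in a few lines. The failure modes, frequencies and costs below are invented for illustration and are not drawn from any benchmarking database.

```python
def annual_risk(events_per_year, cost_per_event):
    """Expected annual cost of a failure mode: frequency multiplied by severity."""
    return events_per_year * cost_per_event


# Hypothetical failure modes: (events per year, cost per event).
failure_modes = {
    "minor trip": (12.0, 5_000.0),           # frequent but cheap
    "major failure": (0.02, 3_000_000.0),    # once in 50 years but costly
    "auxiliary pump failure": (2.0, 40_000.0),
}

# Rank failure modes by expected annual cost, highest first.
ranked = sorted(
    failure_modes,
    key=lambda name: annual_risk(*failure_modes[name]),
    reverse=True,
)
```

In this invented example the frequent minor trip and the rare major failure carry the same expected annual cost, so they sit on the same line of constant risk, while the auxiliary pump failure ranks above both.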

Another example of how to use the data is to characterise reliability in commercial terms. Comparing outages against the times when markets were paying a premium, as determined by hub prices (or a proxy), provides an economic means to describe the value of the outage, or lost revenue opportunity (LRO). Even though trading positions might otherwise have hedged the sale of any given megawatt, such an analysis of “Commercial Unavailability” demonstrates the criticality of evaluating reliability and availability and helps pinpoint and prioritise problem areas.
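One plausible way to compute such a figure is sketched below. The article does not give the exact definition of LRO, so the formula (unavailable megawatts multiplied by the hub price, summed over each outage hour) is an assumption, as are the function name and the prices.

```python
def lost_revenue_opportunity(outage_hours):
    """Sum of (unavailable MW x hub price per MWh) over each hour of an outage.

    outage_hours is a list of (unavailable_mw, hub_price) pairs, one per hour.
    This definition is an assumption made for illustration.
    """
    return sum(mw * price for mw, price in outage_hours)


# Two invented four-hour outages of the same 50 MW unit.
off_peak_outage = [(50.0, 20.0)] * 4    # hub price of 20 per MWh
peak_outage = [(50.0, 120.0)] * 4       # hub price of 120 per MWh

lro_off_peak = lost_revenue_opportunity(off_peak_outage)
lro_peak = lost_revenue_opportunity(peak_outage)
```

Both outages remove the same 200 MWh from the unit’s availability statistics, yet under this assumed definition the peak-hour outage is six times as costly, which is the distinction a purely technical reliability measure cannot show.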

No Geographical Boundaries

Some may argue that you cannot compare assets in different markets with different circumstances involving different currencies, but reliability (or technical performance) knows no geographical boundaries. The use, then, of local hub-pricing is intended as an additional analytical layer by a generator to provide an economic context; it is not meant to accurately characterise currency and other market risk.

Simply put, comparing units with others on an LRO basis demonstrates how you are addressing reliability in a commercial context, which is critical in a deregulating environment.

Benchmarking is a first step to understanding asset performance and it may also guide investment possibilities, whether new build locally or not. Many large utilities feel they don’t need to benchmark with external companies or generating units. However, as competitive forces take hold, it could be that similar assets elsewhere are performing better. Regardless of your market or current situation, why not find out how good you truly are, establish a means to set improvement goals and go after them?